A therapist investigates the hidden economic loop connecting Instagram, Big Pharma, and therapy apps - owned by the same shareholders.

Something has been bothering me for a while. Not in a vague, existential way - but in the specific, nagging way that means there is a question I haven't answered yet.

I am a therapist. I have been doing this work long enough to recognise patterns. The way a particular kind of anxiety presents itself. The specific geometry of modern shame. And over the past several years, something has changed - not in the people, exactly, but in the texture of the suffering. It has a shape I keep recognising. Almost like it was made somewhere.

According to the World Health Organization's 2025 Mental Health Atlas, more than one billion people are now living with a mental health disorder. One in every eight people on earth. That number has been climbing for a decade.

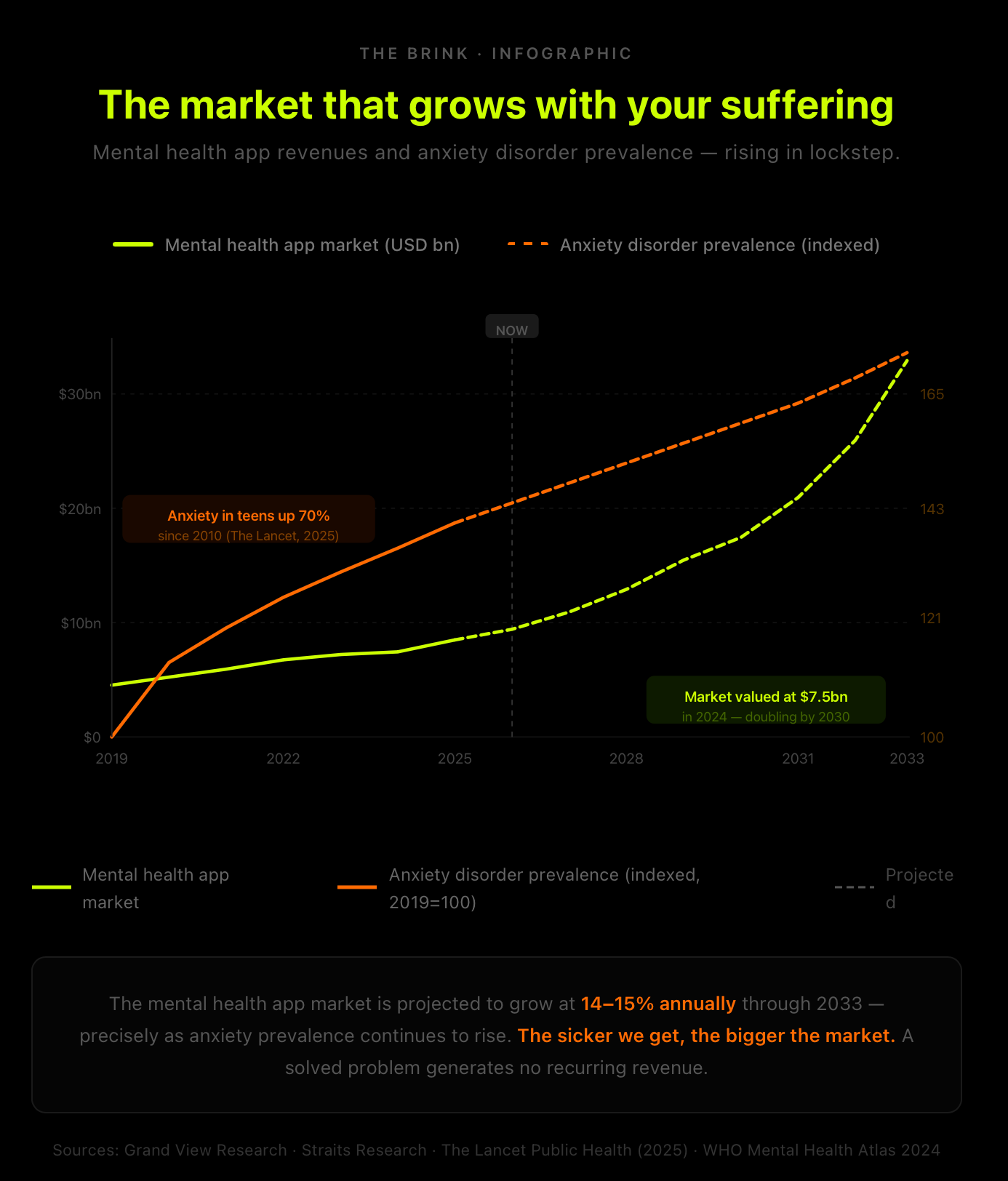

And here is the thing that started pulling at me: at exactly the same moment that mental suffering has been rising, an entire industry has emerged to sell us the cure. The mental health app market alone is now worth $7.5 billion. It is projected to nearly double by 2030.

Two lines going up together. I kept staring at them. And I started to wonder: what if the market for the cure isn't just responding to the suffering - what if it depends on it? What if the system has no interest in the line coming down?

What followed was several months of following a thread. I want to walk you through where it led. Not because I have all the answers, but because what I found was strange enough, and important enough, that I think it needs to be said out loud. Some of it surprised me. Some of it made me angry. Some of it made me sit back and think: of course. How did I not see this before?

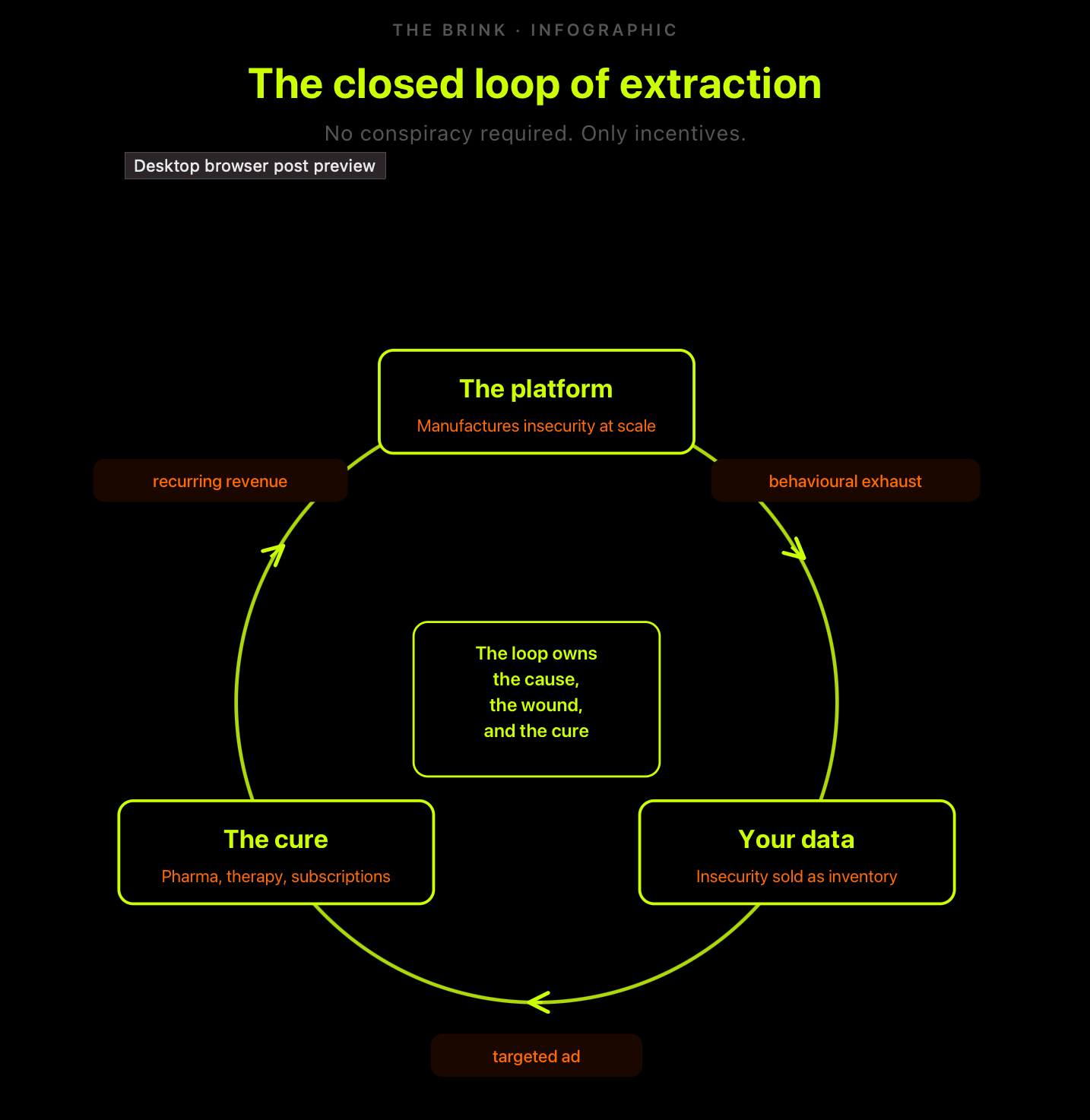

I want to be clear about one thing before we start. This is not a conspiracy theory. There are no secret meetings. No villainous CEOs coordinating in the dark. What I found was something more unsettling than that - a system that doesn't need intent to cause harm. One that produces it structurally, quietly, as a byproduct of ordinary economic logic.

Follow me.

The first strange thing

I want to start with a number that stopped me cold.

More than one billion people are living with a mental health condition. Anxiety disorders among teenagers have risen by nearly 70% since 2010. Depression in the same age group is up 30%. These are not small movements at the margins. This is something happening at scale, across every wealthy nation on earth, at exactly the same historical moment.

Now here is the second number: the companies offering to fix this problem are among the fastest-growing businesses in the world. The mental health technology market was valued at $15 billion in 2024 and is projected to reach $31 billion by 2030. Venture capital has poured hundreds of millions into meditation apps, online therapy platforms, and telehealth services. The sicker we get, the bigger the market gets.

I found myself asking the question that journalists are supposed to ask. Who benefits?

Not from the suffering, necessarily. But from the particular arrangement of things - where the suffering reliably produces customers, and the customers reliably produce revenue, and the revenue reliably produces more of the conditions that created the customers in the first place. Something was clicking into place. But I needed to go further.

The discovery

Here is the idea that I kept circling back to, the one that made the whole picture start to make sense. A solved problem generates no recurring revenue.

If anxiety disappears, therapy subscriptions churn. If insecurity fades, ad targeting weakens. If attention stabilises, engagement metrics collapse. So the system adapted - not through design, not through malice, but through the ordinary logic of incentives.

The most profitable arrangement wasn't solving human problems. It was owning the entire cycle: the cause of the distress, the data produced by that distress, and the products sold back as relief. Not a pipeline. A loop.

I want to give this a name, because naming things is part of what I do. I am going to call it Maintenance Capitalism. The logic is brutal in its simplicity: problem-solving capitalism ends when the problem is fixed. Maintenance capitalism thrives when the problem never quite goes away.

It doesn't want you broken. It doesn't want you well. It wants you perpetually almost okay. Anxious enough to keep clicking and paying. Functional enough to keep working and earning. That is the sweet spot. That is, increasingly, the product.

But I still hadn't found the piece that made it all cohere. The piece that showed it wasn't just parallel industries doing parallel things, but something genuinely interlocking. Something structural. That piece turned out to be hiding in the most boring place imaginable. A filing cabinet. A spreadsheet. A list of shareholders.

The filing cabinet

I want to tell you about the moment the thread became a rope. I had been thinking about the relationship between social media companies and pharmaceutical advertising.

I knew, in a general way, that the two industries had become financially entangled. But I wanted to understand the structure of it - not just the advertising relationship, but the ownership. Who, ultimately, is holding the shares? So I went looking. And what I found was this.

Vanguard holds approximately 6.9% of Meta. BlackRock holds 5.8%. Those are enormous stakes in the company that owns Instagram and Facebook - the machines, as I had come to think of them, that manufacture insecurity at scale.

Then I looked at Eli Lilly. The maker of Mounjaro and Zepbound, the GLP-1 drugs being prescribed in growing numbers to people with body image distress. Vanguard holds 8.5% of Eli Lilly. BlackRock holds 4.4%.

Then I looked at Teladoc Health. The parent company of BetterHelp - the world's largest online therapy platform, the subscription service offering relief to the anxious, the distressed, the scrolled-into-the-ground. BlackRock is a confirmed 5%+ beneficial owner. Vanguard is its largest institutional shareholder. I sat with that for a while.

The same two asset managers. The platform producing the wound. The drug treating the symptom. The therapy service managing the fallout. Three entirely different industries, three entirely different products - and running quietly through all of them, the same shareholder family.

We are taught to think of corporations as competitors. Meta versus Eli Lilly. Tech versus pharma. Social media versus mental health. That framing collapses the moment you look at who actually owns the shares. From the vantage point of Vanguard and BlackRock, who together manage approximately $20 trillion in assets - more than the GDP of China - these aren't opposing forces. They are adjacent revenue streams in the same portfolio.

Under traditional capitalism, a shareholder in BetterHelp should want Instagram to be less toxic. Fewer mentally unwell people means fewer therapy subscribers. The incentive should point toward less harm.

But under what economists call universal ownership, the incentive flips. When you own the platform causing the harm and the service treating it, you don't need the harm to stop. You need the traffic to keep flowing. The more damage Instagram does, the more people need BetterHelp. You win either way.

I keep coming back to this when I think about the people I see. The 3am scrolling. The shame spiral. The particular modern anxiety that feels almost algorithmic in its shape. And I think: somewhere, in a portfolio that contains both the thing that did this and the service trying to undo it, there is a number going up. Not because anyone planned it that way. Because no one needed to.

The body and the drug

I want to show you how the loop works in practice. In the most concrete, transactional terms I can manage.

Here is something worth knowing about Eli Lilly, the pharmaceutical company whose shareholders include Vanguard and BlackRock. They are not primarily a weight-loss drug company. They are the world's largest manufacturer of psychiatric medications. Prozac. Cymbalta.

Decades of antidepressants, anxiolytics, antipsychotics. Their business, in a very real sense, is mental suffering. And for several years, that business has been learning to find its customers where they live: on their phones, on their feeds, in the specific algorithm-shaped moments of maximum vulnerability.

Research published in peer-reviewed journals has shown that social media platforms can use machine learning to identify users experiencing depression or anxiety from their posted content - the language they use, the content they engage with, the patterns of their scrolling - and can serve them targeted mental health advertising accordingly.

The platforms frame this as helpful. Early intervention. Connecting people who need help with the services that offer it. That framing is not entirely wrong. But it is not entirely right either.

Because here is the thing I kept turning over. The platform that is using your engagement data to identify your distress is the same platform that has been engineering that distress for profit.

A landmark legal case in 2025 found that Meta and YouTube made deliberate design choices - including infinite scroll - that borrowed directly from behavioural techniques used by the gambling and cigarette industries to maximise engagement. The court agreed: the harm was not incidental. It was structural.

And then, from that manufactured distress, an ad appears. For an antidepressant. For a therapy subscription. For a telehealth platform that can prescribe something to take the edge off. It arrives at exactly the moment of maximum vulnerability. It doesn't feel targeted. It feels, somehow, personal. Inevitable. Like the feed finally understood you.

The same dynamic operates in the body image space too. The algorithm amplifies comparison and insecurity, the data gets packaged and sold to advertisers, and an ad for a GLP-1 weight loss drug arrives precisely when you've been watching fitness content for forty minutes. Pharmaceutical companies spend approximately $20 billion a year on digital advertising in the US alone, the majority flowing to the platforms that have already identified the wound.

This is the part that I find professionally difficult to sit with. Because what I have just described is a manipulation sequence. Input: distress. Process: algorithmic amplification. Output: pharmaceutical conversion. The user experiences it as a journey toward help. The system experiences it as a pipeline.

And here is the detail that perhaps should alarm us most: Meta runs a dedicated "Health Care Alliance", convening monthly with pharmaceutical advertisers to optimise their reach on Instagram and Facebook. This is not a passive arrangement. The platform is actively building infrastructure around the relationship.

The social media companies are, in a very real sense, now financially dependent on pharmaceutical advertising revenue. If the mental health crisis were genuinely addressed - if the algorithm stopped amplifying harm - that revenue would not dip. It would collapse.

The cure depends on the wound. The wound depends on the feed. The feed depends on the cure's ad spend. That is a loop.

Your attention, rented back to you

There is a second loop running alongside the first. Quieter, in some ways. More personal.

Short-form video didn't just entertain us. It retrained us. Endless scroll conditioned the brain to reject depth in favour of novelty, stillness in favour of stimulation. What emerged wasn't a clinical epidemic of ADHD - but something adjacent and harder to name. Fractured focus. Restless cognition. What some researchers have started calling "popcorn brain" - a mind so habituated to rapid digital input that the ordinary texture of life starts to feel intolerably slow.

Then I found this, and I genuinely had to read it twice: TikTok partnered directly with Headspace to offer meditation to its users. The platform that fragmented your attention span is offering you, for a monthly subscription, the tools to manage the fragmentation it created. You lose focus for free. You buy it back monthly. The deficit is the product. The subscription is the solution. And the same investment ecosystem profits from both ends of the transaction.

I notice this in my own life more than I would like. The phone before bed. The slight, specific unease that follows. The automatic reaching for something - an app, a podcast, a scroll - to smooth the edge left by the previous scroll. I know, intellectually, what is happening. I have written about it. I still do it.

That is the point. Understanding the loop doesn't automatically free you from it. But it does change your relationship to it. And that, I have come to think, is where something starts.

The most troubling thing I found

By this point in my investigation, I had a working theory. A loop. A set of owners. A mechanism. But I kept bumping into the same question, the one I suspected a reader would also ask. Why doesn't someone just stop it?

I thought, naively perhaps, that the answer would be political. Regulatory capture. Lobbying. The usual machinery of power protecting itself. The real answer was stranger than that. And in some ways more troubling.

BlackRock and Vanguard together manage approximately $20 trillion in assets - more than the GDP of China. But I needed to understand how that money works. Because it isn't managed the way you might imagine a fund manager works, sitting at a desk making deliberate choices about which companies deserve investment.

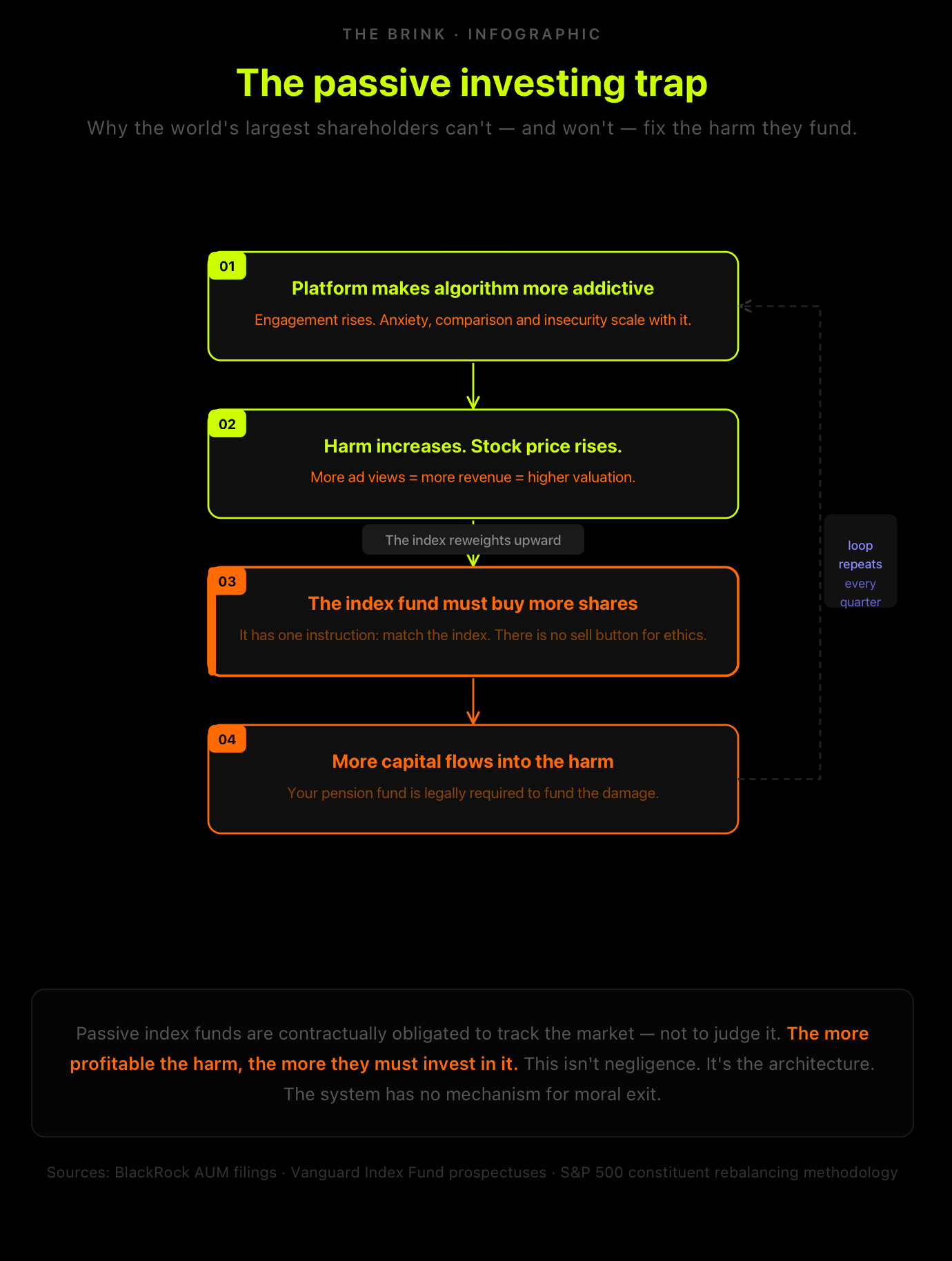

The vast majority of BlackRock and Vanguard's money sits in something called passive index funds. To understand what that means, you need to understand what an index is. An index - like the S&P 500 - is simply a list of companies, ranked by their size.

A passive index fund's entire purpose is to hold shares in every company on that list, in proportion to their size. It doesn't evaluate the companies. It doesn't judge them. It just holds them, automatically, in the right ratios.

This is why these funds are so cheap to run - there are no analysts deciding which companies are good or bad. An algorithm does it. Match the weighting. Track the index. Buy everything on the list.
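
For readers who think better in code, that weighting rule can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not how any real fund is built: the three company names and their market values are hypothetical, and `index_weights` and `allocate` are names invented for this sketch.

```python
def index_weights(market_caps):
    """Return each company's share of the index's total market value."""
    total = sum(market_caps.values())
    return {name: cap / total for name, cap in market_caps.items()}


def allocate(inflow, weights):
    """Split new money across companies in proportion to index weight."""
    return {name: inflow * weight for name, weight in weights.items()}


# Hypothetical three-company index; market values in billions (illustrative only).
caps = {"PlatformCo": 500.0, "PharmaCo": 300.0, "TherapyCo": 200.0}
weights = index_weights(caps)
purchases = allocate(1_000.0, weights)

# The same index after PlatformCo's market value rises. Nothing else changes.
caps_after = dict(caps, PlatformCo=700.0)
weights_after = index_weights(caps_after)
purchases_after = allocate(1_000.0, weights_after)

for name in caps:
    print(f"{name}: {weights[name]:.1%} of new money before, "
          f"{weights_after[name]:.1%} after")
```

In this toy index, PlatformCo's share of every new dollar goes from 50% to roughly 58% once its market value rises from 500 to 700. No function in the sketch asks why the value rose. It only asks how big the company is.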

Here is where it gets disturbing. If Meta makes its algorithm more addictive, and teen anxiety rises, and Meta's stock price rises with it - Meta's weight in the index grows, and the fund's stake grows with it. Every new dollar flowing into the fund is then split according to that larger weighting, so more of it goes to Meta.

The fund must match the new weighting. The harm made the stock go up. The stock going up pulls more money in behind it. There is no sell button for ethics. No override. The system cannot, by design, register the difference between profitable and good.

We have built, without quite intending to, a financial doomsday machine that is contractually obligated to fund our own distress - as long as it remains included in the S&P 500.

I keep thinking about how to explain the emotional weight of that realisation. It isn't that a villain is making these choices. It's that the system has been constructed so that no one is making them. The harm runs on autopilot.

Could BlackRock and Vanguard use their enormous shareholdings to push for change? In theory, yes. As major shareholders, they have the right to vote at company AGMs - to support or oppose proposals about how companies are run.

BlackRock publishes annual stewardship reports that speak earnestly of "engaging" with companies on social issues. But engagement, in practice, usually means a letter or a meeting. And in recent years, both BlackRock and Vanguard have actually been reducing their support for shareholder resolutions on social and environmental issues - not increasing it.

The political pressure against so-called "ESG investing" (the idea that environmental, social and governance factors should inform investment decisions) has made active moral pressure financially inconvenient for them.

The term for this, I learned, is rational apathy. It is not in any individual actor's financial interest to fix the problem. So no individual actor fixes the problem. The incentives align - not toward harm exactly, but toward a perfect, structural indifference to it. Which, when the harm is a billion people suffering, produces the same result.

Why I'm telling you this

I want to be honest about something. When I first started pulling on this thread, I felt the weight of it. There is a particular kind of despair that comes from understanding a system - from seeing the mechanism clearly - and recognising how large and interlocking it is. How far above the level of individual choice it operates.

I know that feeling. I have watched it move through people I work with. The moment when awareness tips from clarifying to crushing. When knowing the name of the thing you're up against makes it feel more immovable, not less. I am telling this story anyway. Not despite that weight, but because of it.

Because the alternative - not naming it, allowing the suffering to remain individualised, letting people believe that their anxiety is purely a private failure - serves the system perfectly. Maintenance capitalism depends on you not seeing the loop. It depends on the wound feeling personal and the cure feeling like your own idea. The moment you can see the architecture, something shifts. Not everything. But something.

The WHO's 2025 data shows that median government spending on mental health remains at just 2% of total health budgets - unchanged since 2017. Meanwhile, the mental health app market is projected to grow by 15% a year. The public system is starved. The private market is booming. These two facts are not unrelated.

The brilliance of this system is that it requires no conspiracy. No secret meetings between the CEO of Meta and the CEO of Eli Lilly. No coordinated malice. The shared shareholders simply demand growth from every company they own. The easiest way for platforms to grow is to destabilise you. The easiest way for pharma and therapy to grow is to treat that destabilisation. The invisible hand of the market aligns itself. It stopped being a hand a while ago. It became a closed fist.

What can actually change

I want to end here carefully. Not with false hope. Not with a list of apps to delete and supplements to take - that would be, given everything I've just argued, somewhat ironic. But defiance is not the same as denial, and clarity is not the same as despair.

There are things that are genuinely within reach. Mandatory separation of advertising revenue from algorithmic amplification would break the financial dependency between platform harm and pharmaceutical spend. Meaningful universal ownership accountability - requiring that asset managers vote their shares in the interests of human wellbeing, not just portfolio throughput - would change the incentive structure at the root.

Public investment in mental health infrastructure at the scale the crisis demands, rather than outsourcing it to venture-backed subscription platforms, would begin to build something that doesn't depend on your ongoing suffering for its business model.

None of this is simple. All of it is political. Most of it will be resisted, hard, by the entities with the most to lose.

But here is what I keep returning to, in the room where I do my work: people are more resilient than the system needs them to be. The loop depends on a particular kind of passivity - a scrolling, consuming, slightly-unwell passivity that never quite tips into either collapse or recovery.

The moment someone sees themselves clearly, understands what is being done to their attention and their self-worth, and decides to want something different - that is a moment the loop cannot easily monetise. I have watched that moment happen. In my office, and in myself. It is not a revolution. It is not a solution. But it is a start.

Naming the weather doesn't make it stop raining. But it does mean you can stop blaming yourself for being wet.

Matt Hussey is a therapist, journalist, and editor of The Brink - a publication on psychology, culture, and technology.